The Enterprise Intelligence Imperative: How AI Tooling Unlocks Sustainable Capability for U.S. Enterprises

27 January, 2026

Executive Summary

Over the past decade, enterprises have dispersed execution across global teams, but innovation and strategic decision-making have remained concentrated. The bottleneck has never been talent; it has been the inability to internalize, scale, and retain engineering intelligence without accumulating technical and organizational debt.

Historically, disruption was framed as the outcome of ideas. Today, it is increasingly defined by the tools that bring those ideas to life. As artificial intelligence becomes a foundational layer of software development, enterprises face a critical tension: how to accelerate delivery without eroding engineering quality. In many cases, speed has been prioritized over sustainability, trading immediacy for long-term capability.

AI tooling offers a path forward.

When applied as a capability layer, not a patch on legacy processes, AI augments engineering reasoning, preserves institutional knowledge, and reduces long-term debt. Modern platforms enable enterprises to mature and scale software development without repeated rebuild cycles, allowing innovation capacity to compound rather than reset. The result is a new kind of leverage that resolves a common blind spot: it moves tools beyond scaffolds to become levers for business intelligence, ensuring software evolution is regenerative, qualitatively disruptive, and inherent to the business DNA.

Architecture over Immediacy: The Emergence of AI Tooling

The longevity and success of an enterprise hinge on iterative capability building, grounded in agile and forward-focused engineering practices (Gartner et al. 2025). It is important to understand what capability entails: talent, infrastructure, data, and tools must meld to form the full picture of enterprise capability. Move one aspect out of focus and the picture distorts.

To sustain incremental change, companies must develop software capabilities that enable internal innovation, amplifying ideation into deployment while supporting cyclical refinement without external dependencies (Forrester and Scipio 2023). As AI becomes a foundational layer of software development, engineers are emerging as central drivers of innovation and strategic decision-making, thereby redefining the core of the modern enterprise.

The Real Bottleneck: Capability, Not Cost

A microcosmic view of enterprise operations reveals why excellence often stalls: despite the rise of AI-enabled processes, engineers often remain at the tail end of feedback loops, constrained by approvals and revision cycles. The bottleneck is not cost or infrastructure; it is a hierarchical structure that limits engineers’ ability to make decisions. Over time, knowledge decays within teams, stalling rigor, stagnating innovation, and necessitating external intervention to rethink strategy (McKinsey 2025).

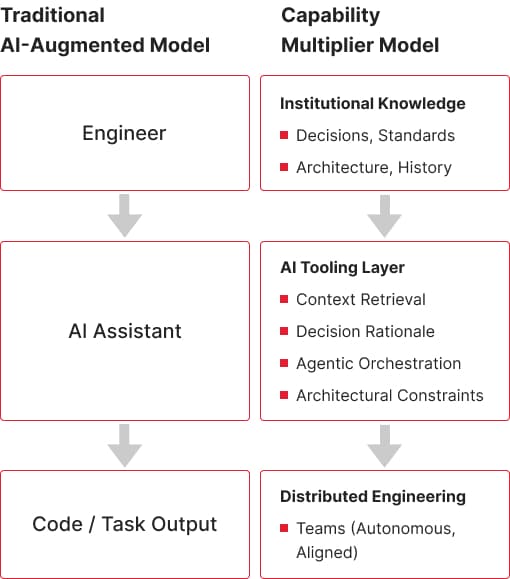

AI changes this dynamic. Rather than patching automation onto fragmented intelligence, AI-powered tools enable engineers to accelerate delivery while building knowledge reservoirs that inform each development cycle, mature product pipelines, and generate lasting value. By embedding AI directly into workflows, enterprises can preserve institutional knowledge, codify architectural rationale, and create repeatable innovation cycles (McKinsey 2025).

The AI imperative is steep, but achievable. AI tooling does not accelerate innovation simply by speeding up tasks; it reshapes where reasoning, context, and decision authority reside. When tools preserve institutional knowledge, surface constraints, and guide judgment, innovation becomes repeatable rather than episodic. Forward-focused enterprises empower engineers, flatten hierarchies, and remove barriers that prevent domain-tuned talent from iterating strategically.

From Task Acceleration to Capability Layer

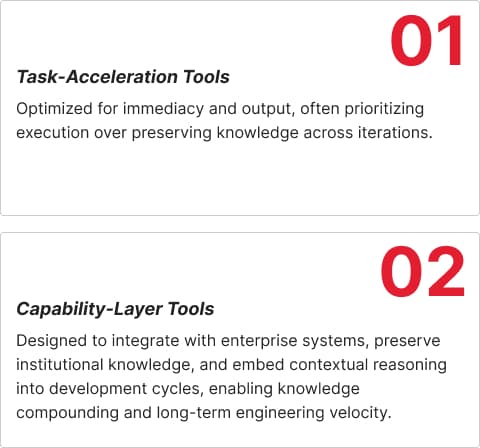

Widening enterprise capability requires more than adding AI to workflows. While many platforms accelerate coding, automate processes, and support QA, enterprises face a bifurcation of needs:

These distinctions highlight why embedding intelligence in tooling, rather than merely executing tasks, is essential. Enterprise examples later in this paper illustrate how AI transforms localized productivity into distributed, compounding capability.

Beyond Automation: How Internal Capabilities Enhance Innovative Output

Enterprise longevity can no longer be secured through automation alone. As AI becomes foundational to software development and decision-making, its real value emerges not from accelerating tasks, but from reshaping how organizations reason, coordinate, and evolve. When AI tooling is embedded into workflows as a medium for context, rationale, and continuity, it enables enterprises to distribute capability without fragmenting coherence.

Examples Across Scales:

- Figma demonstrates how shared tooling encodes collective context, allowing teams to extend systems coherently rather than reinventing intent. Its design environment enables real-time collaboration, shared libraries, and component systems that surface architectural decisions and reusable patterns across teams. Teams adopting Figma’s shared component systems report double-digit reductions in design-to-development handoff time and fewer iteration cycles due to misalignment (Figma 2023).

- Shopify embeds architectural knowledge into development workflows through ROAST, its internal AI-powered experimentation and testing platform. By capturing contextual data, system dependencies, and architectural rationale, ROAST enables engineers to anticipate the impact of changes before execution. This reduces onboarding time for new engineers, lowers escalations to centralized architecture teams, and ensures that operational knowledge is preserved across projects. With ROAST, Shopify demonstrates how AI-enabled tooling can transform localized productivity into distributed enterprise capability, codifying knowledge as a durable asset rather than leaving it fragmented across teams (Shopify 2025).

- Google Vertex AI enables enterprises to coordinate AI agents across systems while preserving context, business logic, and operational knowledge. Its Agent Development Kit (ADK) and Agent Engine allow multi-agent systems to reason, plan, and act collaboratively, transforming localized insights into distributed enterprise capability. Integration with enterprise data sources and the Model Context Protocol (MCP) ensures intelligence is retained and reused across teams. Real-world examples, like Revionics and Renault, show agents applying contextual knowledge to automate workflows and optimize decision-making (Google Cloud and Tiwary 2025).

Across these cases, AI tooling functions as infrastructure for decision-making rather than a shortcut for execution. Capability scales not through headcount growth, but by preserving and propagating intelligence across teams and time.

AI Tooling as Structural Rewiring

AI tooling represents a structural rewiring of enterprise innovation. Where capability was once constrained by geography, hierarchy, and access to expertise, AI-enabled tooling redistributes cognitive leverage, expanding who can contribute, how insight is preserved, and where value is created. This shift is not neutral; it elevates underrepresented talent, enables distributed teams to operate with shared context, and transforms institutional knowledge from a fragile byproduct into a compounding asset. Enterprises embedding AI deeply into capability centers are defining the next generation of value creation.

As AI tooling redistributes cognitive leverage and elevates distributed teams, enterprises require a unifying structure to capture, retain, and compound this intelligence. This structural evolution manifests as the Enterprise Intelligence Layer, a framework that transforms ephemeral insights into enduring organizational knowledge.

The Enterprise Intelligence Layer: Where Capability Becomes Intelligence

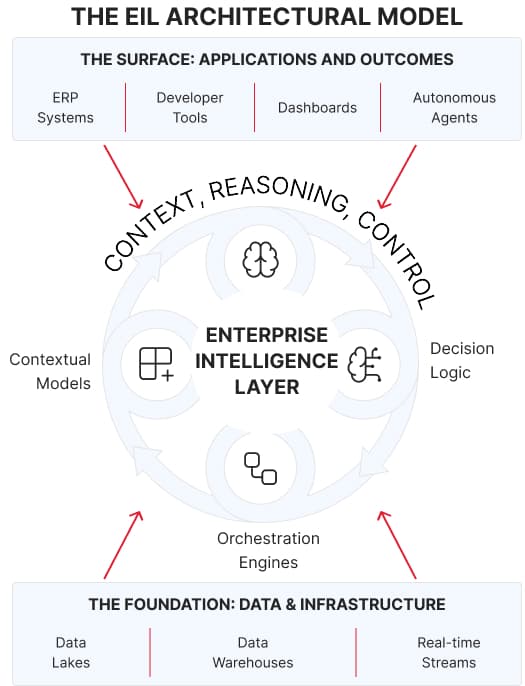

As AI tooling reshapes how innovation is distributed, a new structural layer is emerging inside forward-focused enterprises: the Enterprise Intelligence Layer (EIL).

The EIL is not a single platform, model, or product. It is the integrated intelligence fabric that forms when AI systems, workflows, and human expertise operate as a unified capability: capturing context, preserving institutional knowledge, and enabling learning to persist across teams, time, and systems. In an environment where technological change outpaces organizational adaptation, this layer determines whether intelligence compounds or decays.

Where traditional enterprise systems execute transactions and workflows, the EIL governs how intelligence is created, retained, and reused as regenerative tissue for the enterprise organism. The central and growing risk to the enterprise remains: as AI adoption accelerates, speed is often mistaken for progress, while architectural rationale and decision context are lost to rapid iteration and rework. The EIL shifts AI from task acceleration to capability formation, ensuring that insights generated in one cycle strengthen decisions, systems, and outcomes in the next, rather than being discarded or re-learned. For engineering velocity, this keeps the wheels of innovation turning through every development lifecycle within the enterprise engine.

Architectural Strata

Foundation: Data and Infrastructure

Structured and unstructured data, real-time streams, and secure operational access that ground intelligence in enterprise reality.

Intelligence Layer: Context, Reasoning, and Control

Contextual models (RAG, LLMs, SLMs), orchestration engines, and decision logic that bind AI outputs to business intent, operational constraints, and strategic direction, preventing fragmentation as systems scale.

Surface: Applications and Outcomes

Developer tools, dashboards, ERP systems, or autonomous agents where decisions are executed. Crucially, outcomes feed back into the intelligence layer, allowing learning, rationale, and constraints to be retained rather than lost.

Together, these layers transform AI from an isolated or plug-and-play capability into a durable enterprise asset, one that anchors innovation in accumulated knowledge, reduces systemic engineering waste, and enables enterprises to evolve with clarity rather than react at speed.
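The feedback path between the three strata can be made concrete with a small sketch. The Python below is purely illustrative and is not drawn from any platform named in this paper: the class names (`Foundation`, `IntelligenceLayer`, `Surface`), their methods, and the sample data are all assumptions chosen to show one possible shape, in which outcomes executed at the surface are written back to the intelligence layer and resurface on the next decision.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Foundation:
    """Foundation stratum (hypothetical): enterprise data that grounds decisions."""
    records: dict = field(default_factory=dict)

    def lookup(self, key: str) -> Optional[str]:
        return self.records.get(key)


@dataclass
class IntelligenceLayer:
    """Intelligence stratum (hypothetical): binds requests to data, constraints, and history."""
    foundation: Foundation
    constraints: list = field(default_factory=list)
    retained_outcomes: list = field(default_factory=list)

    def decide(self, request: str, data_key: str) -> dict:
        # Surface any earlier outcomes for the same request so they inform this decision.
        prior = [o for o in self.retained_outcomes if o["request"] == request]
        return {
            "request": request,
            "grounding": self.foundation.lookup(data_key),
            "constraints": list(self.constraints),
            "prior_outcomes": prior,
        }

    def record_outcome(self, request: str, result: str, rationale: str) -> None:
        # Retain the result and its rationale instead of discarding them.
        self.retained_outcomes.append(
            {"request": request, "result": result, "rationale": rationale}
        )


class Surface:
    """Surface stratum (hypothetical): executes a decision and reports the outcome back."""

    def __init__(self, layer: IntelligenceLayer) -> None:
        self.layer = layer

    def execute(self, request: str, data_key: str, rationale: str) -> dict:
        decision = self.layer.decide(request, data_key)
        grounded = decision["grounding"] is not None
        result = "applied" if grounded else "blocked: no grounding data"
        self.layer.record_outcome(request, result, rationale)
        return decision
```

In this sketch, a second call to `execute` with the same request receives the first call's result and rationale in `prior_outcomes`, which is the retention behavior the strata describe. A production system would replace the in-memory lists with durable storage and retrieval.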

How AI Enables Autonomy: Tools Orchestrated by Engineering Logic

As AI enters enterprise-grade software development, the question is no longer whether teams should adopt intelligent tools, but which tools align with how the business intends to scale, govern change, and create value. AI tooling is not monolithic. Copilots, agent-driven systems, and enterprise development platforms each solve different problems, and their impact depends on how intentionally they are embedded into engineering workflows.

Consider a distributed product team responsible for a core customer-facing platform. As usage patterns shift, requirements evolve, not just in features, but in performance, compliance, and orchestration across services. In environments built for enterprise-grade development, engineers don’t rewrite or patch systems reactively. Instead, AI-enabled tooling surfaces architectural constraints, recommends refactoring paths, and simulates downstream impact before changes are deployed. Iteration becomes continuous, informed, and reversible, allowing teams to scale functionality without destabilizing the system beneath it. This shift, from reactive change to intentional evolution, is where AI moves beyond assistance and into agency.
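One simple way to picture "simulating downstream impact" is as a traversal of a service dependency graph. The sketch below is an assumption-laden illustration rather than a description of any specific tool: the function `downstream_impact` and the `DEPENDENTS` graph are hypothetical, and real tooling would derive the graph from build metadata or runtime traces rather than a hand-written dictionary.

```python
from collections import deque


def downstream_impact(dependents: dict, changed: str) -> list:
    """Return every service transitively affected by a change, in breadth-first order."""
    seen = {changed}
    queue = deque([changed])
    impacted = []
    while queue:
        current = queue.popleft()
        for service in dependents.get(current, []):
            if service not in seen:
                seen.add(service)
                impacted.append(service)
                queue.append(service)
    return impacted


# Hypothetical service graph: each key maps to the services that depend on it.
DEPENDENTS = {
    "auth": ["checkout", "profile"],
    "checkout": ["invoicing"],
    "profile": [],
    "invoicing": ["reporting"],
}

print(downstream_impact(DEPENDENTS, "auth"))
# ['checkout', 'profile', 'invoicing', 'reporting']
```

Listing the affected services before deployment gives a team the chance to review or stage a change, which is the "informed and reversible" property the paragraph describes.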

Comparative Framework: Task-Acceleration vs. Capability-Building

To illustrate how AI tooling and software development platforms differ in their impact on enterprise capability, the following table compares representative tooling archetypes. It demonstrates how each either reinforces localized output or builds a bridge toward knowledge compounding and architectural continuity:

From Assisted Intelligence to Enterprise Capability: The Maturity Crescendo

Most organizations initially perceive transformation at the stage of Assisted Intelligence, where tools surface patterns, support individual decisions, and generate situational recommendations. The experience is compelling: faster insights, sharper judgments, and reduced blind spots. Yet this stage is deceptive. The need to move beyond reactive engineering is a digital transformation imperative. Assisted intelligence remains localized and ephemeral unless brought into the living context of the system. Enterprises appear smarter, but they do not become smarter.

Transformation stalls until tools move from assisting decisions to participating in knowledge formation, entering the stage of Knowledge Compounding. Here, tools encode context; assumptions, rationale, dependencies, and outcomes are preserved across iterations. Engineers build atop prior reasoning, and intelligence lives within the system, not just the tool. The health of the organization remains intact, independent of personnel turnover or governance shifts. Each iteration strengthens the enterprise’s cognitive infrastructure, enabling capability to compound over time.
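As a rough illustration of what preserving assumptions, rationale, dependencies, and outcomes across iterations could look like in data, consider the sketch below. It is a minimal example under stated assumptions: `DecisionRecord`, `DecisionLog`, and every field name are hypothetical, and the approach resembles a lightweight architecture decision log rather than any particular product's design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    """One preserved decision (hypothetical schema)."""
    id: str
    decision: str
    rationale: str
    assumptions: tuple = ()
    depends_on: tuple = ()
    outcome: Optional[str] = None
    supersedes: Optional[str] = None  # id of the earlier record this one builds on


class DecisionLog:
    """Append-only store so later iterations can build on prior reasoning."""

    def __init__(self) -> None:
        self._records = {}

    def add(self, record: DecisionRecord) -> None:
        if record.id in self._records:
            raise ValueError(f"duplicate record id: {record.id}")
        if record.supersedes is not None and record.supersedes not in self._records:
            raise KeyError(f"unknown superseded record: {record.supersedes}")
        self._records[record.id] = record

    def lineage(self, record_id: str) -> list:
        """Return the chain of reasoning behind a record, newest first."""
        chain = []
        current = self._records.get(record_id)
        while current is not None:
            chain.append(current)
            if current.supersedes is None:
                break
            current = self._records.get(current.supersedes)
        return chain
```

A new engineer calling `lineage` on the latest record would see not only the current decision but the earlier decisions and rationale it replaced, which is one way reasoning can outlive personnel turnover.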

At full maturity—Enterprise Capability—tools no longer just assist. They enable continuous, disciplined innovation grounded in business intelligence. Engineers reason with the system, extending and refining intelligence rather than bypassing it. Achieving this requires structural alignment and sovereign governance; intelligence becomes a force multiplier for organizational cognition.

Real Enterprise Use Cases: AI Tooling as a Capability Multiplier

To understand how sustained value creation materializes, we must look beyond specific platforms and toward the Capability Multipliers they represent. When categorized by their architectural impact, four distinct classes of tooling emerge, each corresponding to a stage on the enterprise intelligence maturity curve. These archetypes illustrate how tools can move beyond simple augmentation to holistically shape organizations into sovereign capability hubs.

I. Transactional AI Agents (Assisted Intelligence)

At the entry point of adoption are tools designed to augment individual reasoning within a narrow, ephemeral window. These AI-native development environments and transactional agents assist engineers with planning, automating repetitive syntax, and preparing initial pull requests.

- The Capability Shift: While these tools deliver significant acceleration—demonstrated by high plan approval rates and improved situational decision-making—the intelligence remains localized to the moment of use.

- The Strategic Limit: Architectural knowledge and cross-team learnings are rarely retained. These tools enhance individual throughput but are insufficient for building lasting institutional memory. They represent Assisted Intelligence: effective in the moment, but non-persistent.

II. Operational Agility Frameworks (Operational Intelligence)

The next evolution occurs when intelligence begins to persist within systems, capturing operational judgment rather than merely supporting individual decisions. This is exemplified by low-code internal application frameworks that allow teams to move from ephemeral insights to persistent, system-level knowledge.

- The Capability Shift: By embedding business rules, operational logic, and specialized workflows directly into the deployment platform, organizations create a living knowledge layer.

- The Strategic Value: In this model, intelligence evolves with the system rather than residing solely with individual contributors. Operational judgment is preserved and compounded across teams, enabling faster adaptation to shifting requirements and improving governance without the traditional overhead of manual documentation.

III. Architectural Modernization Ecosystems (Architectural Persistence)

As enterprises scale, the locus of intelligence must shift from workflow optimization to architecture-level persistence that transcends individual projects. This class of high-performance development platforms is used to replace aging legacy systems and build integrated, modern internal ecosystems at a speed previously unattainable.

- The Capability Shift: These platforms enable teams to implement reusable patterns, composable modules, and governed standards once, then reuse them repeatedly across the application landscape.

- The Strategic Value: This systemic memory transforms episodic software delivery into sustained capability building. By reinforcing architectural intent, these tools enable distributed teams to maintain consistency and reduce regressions. The resulting efficiency gains and rapid payback cycles reflect the high productivity advantages of embedding architectural intelligence into the enterprise tooling fabric.

IV. Multi-Divisional Intelligence Hubs (Institutionalizing Domain Intelligence)

At the highest stage of maturity, tooling begins to institutionalize domain intelligence, reaching the full crescendo of Sovereign Capability. This is achieved through ecosystems that empower thousands of employees across business and technical functions to participate directly in application development.

- The Capability Shift: This democratization brings domain expertise directly into the code and tooling logic, significantly reducing rework and aligning business needs with software outcomes.

- The Strategic Value: When domain logic is encoded systemically, intelligence becomes a durable asset that is refined and reused across business units. The organization no longer just automates isolated tasks; it builds a self-evolving mechanism where every new application enriches the collective intelligence fabric, generating millions in added value through improved process efficiency.

A New Class of Enterprise Tooling – Compounding Knowledge for Innovation

Viewed collectively, these industry archetypes offer more than validation; they reveal an acceleration path. Forward-thinking enterprises can see how capability matures as AI tooling evolves from assisting individual work to encoding operational judgment and preserving domain logic. Each step moves intelligence closer to the core of the organization, yet the challenge of continuity at scale remains the final frontier.

Modernization cannot rely on episodic upgrades or tool-driven acceleration. Sustainable advantage requires adaptive, intelligence-driven systems that learn, remember, and inform future decisions rather than resetting with every iteration. It was through this realization that Hyper became a core focus. What began as an internal effort to accelerate delivery quickly exposed a deeper structural need: enterprise capability does not live in faster execution alone, but in intelligence embedded at the center of the system, where context, rationale, and architectural intent persist to guide both strategy and innovation.

Hyper — A Case Study in Capability Compounding

Hyper was developed in response to a common pattern in enterprise environments: systems built to meet evolving needs are repeatedly patched and extended, accumulating complexity while shedding context. Over time, development becomes reactive, and each new generation of engineers inherits functionality without rationale.

Rather than treating this as a documentation or governance problem, Hyper approached it as a capability architecture challenge.

From the outset, the platform was designed to embed contextual continuity directly into the development lifecycle. Requirements were treated as evolving signals rather than static inputs. Architectural intent, system logic, and decision rationale persisted across iterations, allowing software to mature through accumulation rather than reconstruction.

In practice, Hyper demonstrated alignment with the capability layers described earlier:

- Execution efficiency: Deterministic build patterns and reusable scaffolds reduced development cycle time for internal tools by approximately 2×.

- Architectural coherence: Engineers could reason about system intent and dependencies without centralized gatekeeping.

- Iterative capacity: Scaling occurred without proportional rework, supported by auditable change history and preserved design rationale.

- Autonomy and portability: Full code ownership avoided vendor dependency or lock-in. Engineers could command development processes through learned processes, rather than staying tied to external feedback.

- Onboarding and knowledge transfer: New engineers inherited knowledge directly from the system, rather than relying on ad hoc handovers.

These outcomes were not the result of automation alone. They reflect a shift in how intelligence is treated within the system: persisted, referenced, and extended rather than rediscovered.

Over time, Hyper’s internal impact revealed a broader implication: each iteration strengthened the system’s intelligibility rather than eroding it. Engineering velocity increased alongside institutional understanding, reducing reliance on external expertise and minimizing loss of context across organizational change.

How Capability Thrives Through Compounding Intelligence Systems

What Hyper revealed was not merely a faster development workflow, but a fundamentally different behavior of the system itself. Each iteration strengthened the platform’s ability to reason, adapt, and evolve, without losing context or accumulating hidden complexity. Intelligence was no longer consumed at the moment of execution; it was retained, refined, and reused.

This pattern, where learning persists beyond individual interactions and capability improves with every cycle, points to a broader class of systems now emerging across forward-looking enterprises. When the Enterprise Intelligence Layer (EIL) is embedded across an organization’s capability centers, particularly within AI-enabled Global Capability Centers and in-house AI labs, it gives rise to Compounding Intelligence Systems (CIS).

These systems are designed to accumulate learning over time, improving decisions, workflows, and innovation velocity with each interaction. Knowledge does not decay as teams change or projects conclude; instead, it compounds, becoming more precise, more contextual, and more valuable as it is reused.

Human expertise remains central. Engineers, researchers, and domain specialists contribute tribal knowledge, strategic judgment, and creative insight, while AI tooling amplifies, preserves, and redistributes that intelligence at scale. The result is a self-reinforcing cycle: capability generates knowledge, knowledge strengthens tooling, and tooling accelerates capability.

It is within this architectural and operational context that leading enterprises are deploying AI, not as isolated productivity tools, but as the foundation of a new intelligence-driven operating model.

Beyond Assemblage: Building Inherent Enterprise Capability

The dominant enterprise reflex in an AI-accelerated landscape is speed. New tools are adopted, workflows are automated, and solutions are layered rapidly to demonstrate progress. In the short term, this produces output. In the long term, it produces erosion.

Plug-and-play innovation treats software as an interchangeable surface rather than a system of accumulated understanding. Each new tool solves a localized problem but sheds context: architectural rationale is implicit, business assumptions remain undocumented, and engineering decisions are revisited repeatedly. Intelligence fragments across platforms, vendors, and teams. What remains is functionality without memory.

This is how engineering debt is truly incurred, not simply through imperfect code, but through the loss of reasoning. As systems scale, teams spend increasing effort reinterpreting past decisions, reconciling mismatched abstractions, and rebuilding context that no longer exists. Speed gives way to iteration loops. Execution accelerates while understanding decays (Woods 2018).

Assembled capability optimizes for delivery. Built capability optimizes for learning.

Enterprises that build capability internally do so to reverse this erosion. By embedding intelligence directly into systems and capturing why decisions were made, not just what was shipped, they ensure that each iteration strengthens the organization. Knowledge becomes cumulative. Architectural intent persists. Engineering effort moves forward instead of circling back.

AI tooling is decisive only when it is structural. When embedded as a capability layer rather than an external accelerator, AI systems preserve context, surface rationale, and redistribute learning across teams. Human judgment remains central, but it no longer evaporates. Engineers are not just shipping solutions; they are extending a living system of intelligence.

This is the difference between reacting to change and evolving through it.

Why Knowledge Will Govern the Next Era of Enterprise Intelligence

In the coming decade, enterprise advantage will not be defined by who adopts AI first, but by which organizations retain clarity as their systems evolve. When tools change, architectures shift, and teams rotate, only enterprises that preserve knowledge will compound intelligence rather than rebuild it.

Sovereign enterprise capability relies on knowledge as the asset that compounds without decay. Preserved intelligence ensures that decisions inform future decisions, patterns evolve into architectures, and systems grow more coherent as they scale. AI tooling, designed as infrastructure, becomes the backbone enabling learning to endure across time, teams, and technology cycles. Innovation is no longer reactive or tool-driven; it is guided by accumulated understanding.

Engineers—the heartbeat of the enterprise—operate with clarity, reasoning across systems, adapting to shifting demands, and implementing change without sacrificing coherence. Research feeds execution; execution reinforces learning. The organization advances with intent.

Enterprises that rely on assembled innovation move in widening loops of rework and reinvention. Those that build internal capability move with trajectory. Their systems remember, adapt, and evolve. By enabling engineers to act as architects of change, enterprises fortify knowledge, processes, and lessons that endure.

This is how software stops being ephemeral and becomes institutional intelligence, enabling enterprises to be change drivers, not service providers.

Choose What Your Systems Remember

You can keep assembling solutions: adding tools, moving faster, and absorbing the hidden cost of context loss and engineering waste. Or you can build capability deliberately by embedding AI tooling as a knowledge-preserving layer that allows intelligence to compound.

The enterprises that lead the AI era will not be the fastest, but the ones whose systems grow wiser with every iteration.

Build for compounding intelligence. Start now: Pilot one internal AI capability designed to learn, not just execute.

Bibliography

- Deloitte, Kelly Raskovich, Bill Briggs, et al. 2024. “The Intelligent Core: AI Changes Everything for Core Modernization.” Deloitte, December.

- Figma. 2023. Figma State of the Designer Report. https://static.figma.com/uploads/a6d8653ecc00e95eb4e060ff5c86b4320859d4b1

- Forrester, and Hannibal Scipio. 2023. The Partner Relationship Management Landscape, Q2 2023. https://www.forrester.com/report/ai-in-enterprise-software-development/RES179249

- Gartner, Matt LoDolce, and Meghan Moran. 2025. “Gartner Predicts 80% of Enterprise Software and Applications Will Be Multimodal by 2030, Up from Less Than 10% in 2024.” Gartner.

- Google Cloud, and Saurabh Tiwary. 2025. “Vertex AI Offers New Ways to Build and Manage Multi-Agent Systems.” Google Cloud, April 10.

- McKinsey. 2025. How an AI-Enabled Software Product Development Life Cycle Will Fuel Innovation. https://www.mckinsey.com/industries/technology-media-and-telecommunications/our-insights/how-an-ai-enabled-software-product-development-life-cycle-will-fuel-innovation.

- Shopify. 2025. “Introducing Roast: Structured AI Workflows Made Easy.” June. https://shopify.engineering/introducing-roast

- Woods, David D. 2018. “The Theory of Graceful Extensibility: Basic Rules That Govern Adaptive Systems.” Springer Nature Link.